Despite all the good that has come from AI, the risks and harms have been known for decades. As such, countries recently convened for the world’s first AI safety summit. Photo credit: Steve Johnson via Unsplash

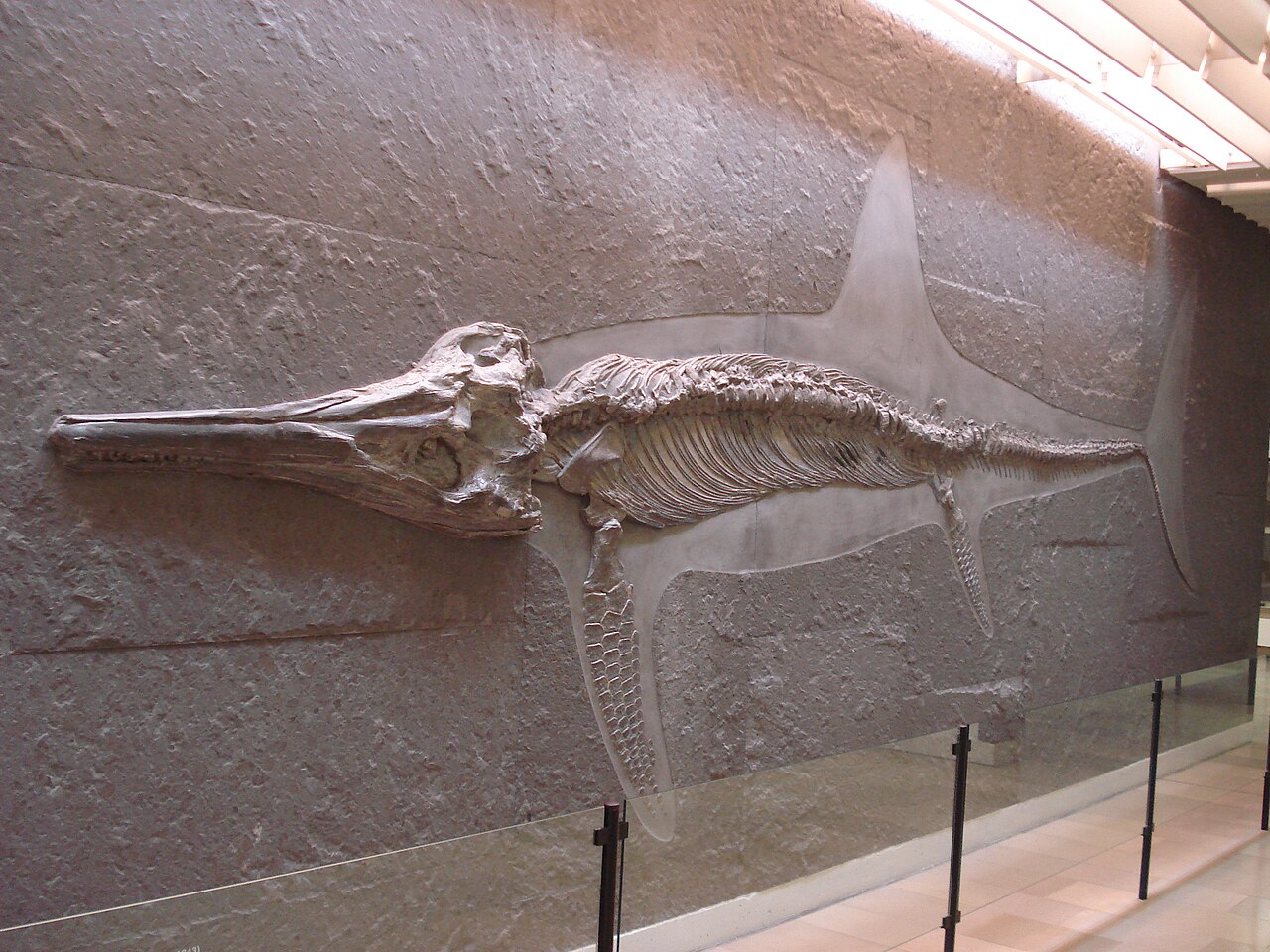

Less than a year after the release of ChatGPT brought the dangers and impact of Artificial Intelligence to the forefront of public debate, the UK Government convened the world’s first AI Safety summit. It gathered 28 countries, the EU, top companies and civil society together in Bletchley Park, where the ‘father of AI’ Alan Turing had famously worked during World War II. The hope was clearly ambitious: to gain international consensus around the risks, and to move towards a shared understanding of how to solve them.

Risks and Harms from AI Systems

Experts have been warning of the harms and risks from AI systems for decades, and in recent years the litany of examples of AI systems having negative real-world impacts has become all the more clear. The AI that powered Google’s algorithm labelled black people as gorillas. AI systems used to sort out mortgages in the USA have been found to lead to discrimination against Latinos and African Americans in their outcomes. AI algorithms already drive addiction and extremism through social media platforms, and experts have warned that the growing power of AI companies may be similar to the rise to power of social media companies a decade ago. The use of AI systems in the justice system has been seen to reinforce inequalities. Furthermore, the production of these AI systems generates harms in and of itself. Large Language Models, like ChatGPT, use vast quantities of data scraped from the internet, which has driven privacy concerns, and many artists in particular have accused AI companies of stealing their artwork for models like DALL-E, whilst threatening to put them out of a job. Detoxifying these models has been seen to lead to psychological trauma for the workers involved, so even the measures companies use to make their models ‘safe’ aren’t unproblematic.

The concentration of power through AI systems is another growing concern. Institutions as diverse as Amazon and the Chinese Government have used AI surveillance to increase their power over others. Big tech companies have been concentrating economic and political power in themselves, with very little accountability thus far for the harm their systems could cause, or for the perceived risks that are borne by society.

A growing group of experts is also concerned with the catastrophic or existential risk that AI systems might pose. These experts, including Yoshua Bengio and Geoff Hinton, who won the Turing Award (the highest award in Computer Science) for their pioneering work on AI, suggest that AI systems may soon become more capable than humans, and our inability to ‘align’ them with human values may cause human disempowerment or extinction. This may be because of direct ‘takeover’ by AI systems, or because AI systems are slowly given more and more power that we can then never take back. The worry here is not so much that they will be ‘conscious’, but rather that no one really understands how to make these amoral systems ‘ethical’. Developers are making these systems increasingly powerful without obvious ways to curb that power, which, if used for ill, whether or not the user intends it, could result in the death of millions or billions of people. Others have proposed more ‘mundane’ ways that an AI-driven catastrophe could occur, such as the integration of AI systems into nuclear command and control or, more speculatively, the use of AI to develop bioweapons.

The Bletchley Declaration

Despite this discussion, political recognition of these risks, and consensus over them, have often seemed elusive. The most prominent outcome of the summit was therefore the Bletchley Declaration, signed by all the states in attendance and the EU. This acknowledged a whole litany of risks and harms from AI, and resolved to ‘promote cooperation over the broad range of risks involving AI’. The declaration particularly recognised the ‘potential for serious, even catastrophic, harm’ resulting from frontier AI models and the ‘particularly strong responsibility’ the developers of these models have to ensure their safety.

‘It shows international consensus that frontier AI systems represent significant risks, with the potential for catastrophic harms from future developments if safety is not made a priority,’ said Seán Ó hÉigeartaigh from the Centre for the Study of Existential Risk at the University of Cambridge. However, he also cautioned that ‘A focus on the emerging risks of frontier AI must not detract from the crucial work of addressing these concrete harms [human rights issues, fairness, accountability, bias mitigation, and privacy protection]’. This need to consider the breadth of risks from AI systems was also emphasised by Jack Stilgoe, a professor of Science and Technology Studies at UCL, who has been critical of much of the focus on existential risk in the AI discussion. On social media, he said that he was ‘pleasantly surprised’ by the declaration, where ‘the range of risks [it focuses on] is now much broader [than it originally appeared it might be]’.

Towards concrete action?

Despite agreement over the risks, the Summit was light on concrete action. The UK’s AI Safety Institute (formerly the AI Taskforce) has received commitments from AI companies to give it access to their model weights so that it can carry out safety evaluations. Many of these companies released voluntary safety policies before the summit and played a leading role in discussions at Bletchley Park. Nonetheless, experts suggest these commitments are far from sufficient. A review by 15 academics led by the Leverhulme Centre for the Future of Intelligence at the University of Cambridge found that ‘leading AI companies are not meeting the UK Government’s best practices for frontier AI safety’. Even more pessimistic was Nate Soares of the Machine Intelligence Research Institute, who suggested that the commitments ‘all imply imposing huge and unnecessary risks on civilization writ large’.

For many, the action by the US on AI safety has upstaged the UK. The day before the Summit, the Biden Administration issued an Executive Order which has been hailed as some of the most comprehensive action on AI to date, covering issues as diverse as safety tests of AI systems, civil rights, privacy, and workers’ rights. The Order, amongst other things, instructed research into protecting against biosecurity threats from AI, the development of standards for protecting against fraud, the development of best practices to protect workers from the impact of AI, and the provision of federal support for privacy-preserving technologies. Many of the issues the Executive Order hopes to address, such as equity concerns around the use of AI in the justice system, have been discussed for years by a growing ‘AI Ethics’ community as key harms of AI systems. These problems were largely overlooked at the UK summit, which mostly focused on ‘frontier models’ instead.

Problems still remain

Conspicuously missing from discussions at the summit, however, was the suggestion of a moratorium on building more powerful frontier AI models, an idea popularised by a widely signed open letter released by the Future of Life Institute in March. Outside the summit, a small group of protesters from the group ‘PauseAI’ gathered at 6 am, brandishing signs with slogans such as ‘Just don’t build AGI’. AGI refers to Artificial General Intelligence, the stated aim of many of the leading AI companies, which often say they are trying to build it because they believe it could lead to human extinction unless built safely. Whilst much of the discussion at the summit was about this very question of how to ensure we build these systems safely, the PauseAI protesters were not convinced we should even be trying in the first place. ‘What has come out of Bletchley is not sufficient,’ Alistair Stewart, a PauseAI spokesperson, told the Oxford Scientist. ‘A ban on building superintelligence is the only sensible, responsible solution for keeping us safe; anything short of that isn’t good enough’.

For others, however, the problem wasn’t just the lack of specific policies: the exclusive nature of the summit also raised serious concerns around accountability and democracy. Only a small number of members of civil society were invited to the summit, with Vidushi Marda, an attendee from the non-profit REAL ML, saying ‘If this is truly a global conversation, why is it mostly U.S. and U.K. civil society [in attendance]?’. Whilst a more public-oriented ‘AI Fringe’ happened simultaneously, these discussions did not seem to filter through to those behind the police fences at Bletchley. Meanwhile, the influence of the large technology companies was clearly on show. Associate Professor Carissa Veliz of the Institute for Ethics in AI at the University of Oxford lambasted the prominent role played at the summit by tech executives, who, she believes, ‘by definition, cannot regulate themselves’. The recent sacking-and-return of OpenAI CEO Sam Altman has, for some, only highlighted this fact further.

‘The work needed to make AI respectful of human rights and supportive of fairness and democracy is yet to be done,’ Veliz further suggested. Ó hÉigeartaigh shared a similar sentiment, suggesting ‘concrete governance steps…need to follow’. However, since the summit, lobbying by European AI companies has threatened to derail the EU AI Act, highlighting how difficult the fight over the future of AI may be.